
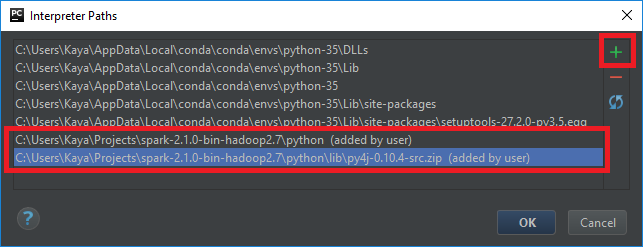

In this chapter, we will understand the environment setup of PySpark.

Note − This assumes that you have Java and Scala installed on your computer.

How to Install PySpark on Windows/Mac with Conda

To install Spark on your local machine, a recommended practice is to create a new conda environment. This new environment will install Python 3.6, Spark and all the dependencies. The following is a detailed process for installing PySpark on Windows/Mac using Anaconda. See also Installing Jupyter using Anaconda.

Head over to the Anaconda download page and install the latest version of Anaconda. Make sure to download the Python 3.7 version for the appropriate architecture. Then begin the installation process. The installer walks through Getting Started, the License Agreement, and Select Installation Type. Select Just Me if you want the software to be used by a single user.

Once conda is installed by installing either Anaconda or Miniconda, other software packages may be installed directly from the command line with conda install. Enter the command pip install sparkmagic==0.13.1 to install Spark magic for HDInsight clusters version 3.6 and 4.0. A build toolchain (gcc, flex, autoconf, etc.) is needed to install fastparquet using pip.

Let us now download and set up PySpark with the following steps.

Step 1 − Go to the official Apache Spark download page and download the latest version of Apache Spark available there. In this tutorial, we are using spark-2.1.0-bin-hadoop2.7.

Step 2 − Now, extract the downloaded Spark tar file. By default, it will get downloaded in the Downloads directory.

    # tar -xvf Downloads/spark-2.1.0-bin-hadoop2.7.tgz

It will create a directory spark-2.1.0-bin-hadoop2.7. Before starting PySpark, you need to set the following environment variables to set the Spark path and the Py4j path. Note that the shell does not allow spaces around the = sign.

    export SPARK_HOME=/home/hadoop/spark-2.1.0-bin-hadoop2.7
    export PATH=$PATH:/home/hadoop/spark-2.1.0-bin-hadoop2.7/bin
    export PYTHONPATH=$SPARK_HOME/python:$SPARK_HOME/python/lib/py4j-0.10.4-src.zip:$PYTHONPATH

To set these variables globally instead, put them in your shell's startup file and reload it so that they take effect.

Now that we have all the environment variables set, let us go to the Spark directory and invoke the PySpark shell. It starts a Python interpreter, whose banner includes the line: Type "help", "copyright", "credits" or "license" for more information.

Next, we need to install the pyspark package to start Spark programming using Python. Configure PyCharm to use Anaconda Python 3.5 and PySpark 1.
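The SPARK_HOME, PATH and PYTHONPATH settings described in this chapter can also be applied from inside Python, which may help when a script or an IDE does not inherit the shell's exports. The following is a minimal sketch, not part of the original tutorial: the helper names `spark_env` and `activate` are hypothetical, and the code assumes the extracted Spark directory contains `python/lib/py4j-*-src.zip`, as the spark-2.1.0-bin-hadoop2.7 layout above suggests.

```python
import glob
import os
import sys


def spark_env(spark_home, base_env=None):
    """Return a copy of base_env with SPARK_HOME, PATH and PYTHONPATH set.

    spark_env is a hypothetical helper name used only for this sketch.
    """
    env = dict(os.environ if base_env is None else base_env)
    python_dir = os.path.join(spark_home, "python")

    # The py4j archive name depends on the Spark release, so look it up
    # instead of hard-coding py4j-0.10.4-src.zip.
    pattern = os.path.join(python_dir, "lib", "py4j-*-src.zip")
    py4j_zips = sorted(glob.glob(pattern))
    if not py4j_zips:
        raise FileNotFoundError("no file matching " + pattern)

    env["SPARK_HOME"] = spark_home

    # Append Spark's bin directory to PATH, as the export line does.
    path_parts = [env.get("PATH", ""), os.path.join(spark_home, "bin")]
    env["PATH"] = os.pathsep.join(p for p in path_parts if p)

    # Put the PySpark sources and the py4j archive in front of PYTHONPATH.
    pythonpath_parts = [python_dir, py4j_zips[-1], env.get("PYTHONPATH", "")]
    env["PYTHONPATH"] = os.pathsep.join(p for p in pythonpath_parts if p)
    return env


def activate(spark_home):
    """Apply the settings to the current process (hypothetical helper)."""
    env = spark_env(spark_home)
    os.environ.update(env)
    # Make the two Spark entries importable in the running interpreter.
    for entry in env["PYTHONPATH"].split(os.pathsep)[:2]:
        if entry not in sys.path:
            sys.path.append(entry)
```

Calling `activate("/home/hadoop/spark-2.1.0-bin-hadoop2.7")` before `import pyspark` would mirror the three export lines for the current process only. Looking up the py4j archive with a glob is a design choice for this sketch, so the same code keeps working when a different Spark release ships a different py4j version.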
